The marketing professor prompt

The image generator has a gender bias.

When asked for a marketing professor, the system produced a man in a Harvard blazer who projected authority and gravitas. When asked for a female marketing professor, it produced a timid schoolteacher. That's misogyny embedded in image generation.

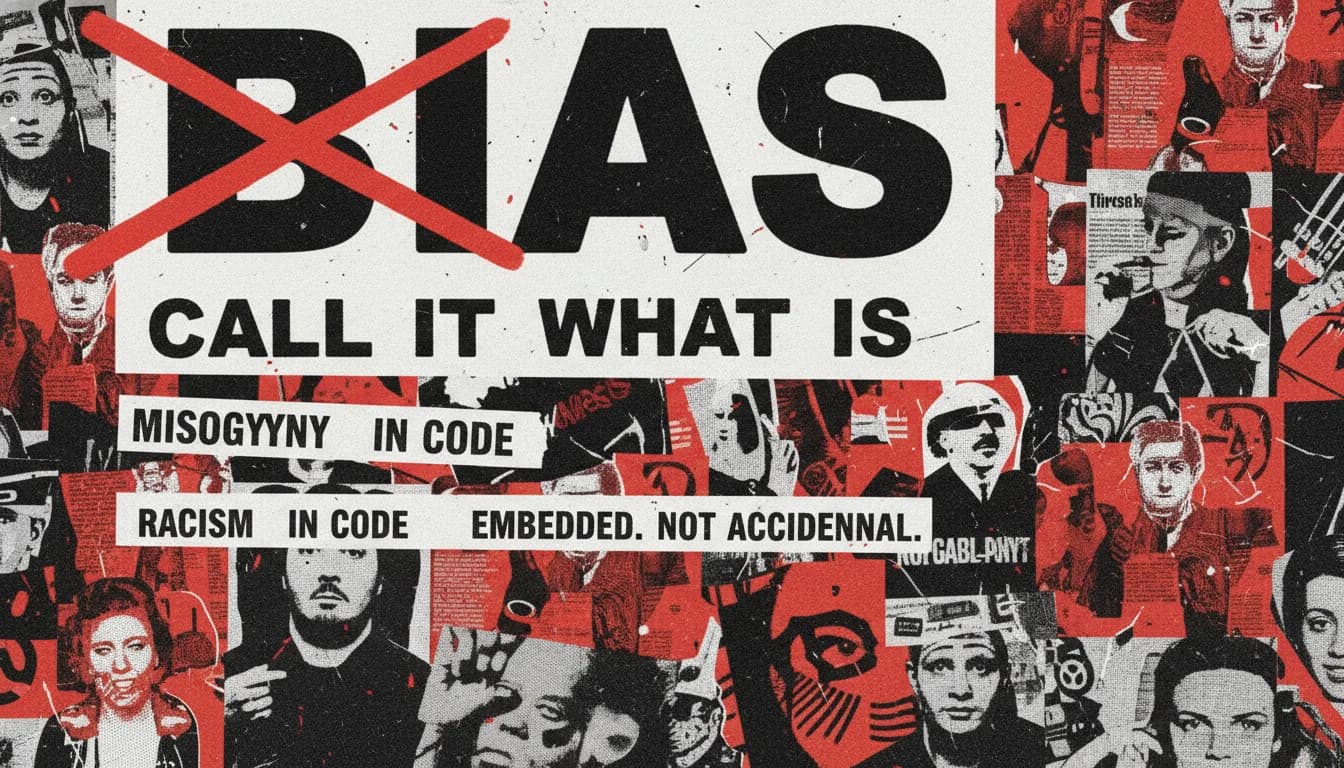

Bias is a math word. Misogyny names the actual content of the discrimination. Names point at people. Math points away from them.