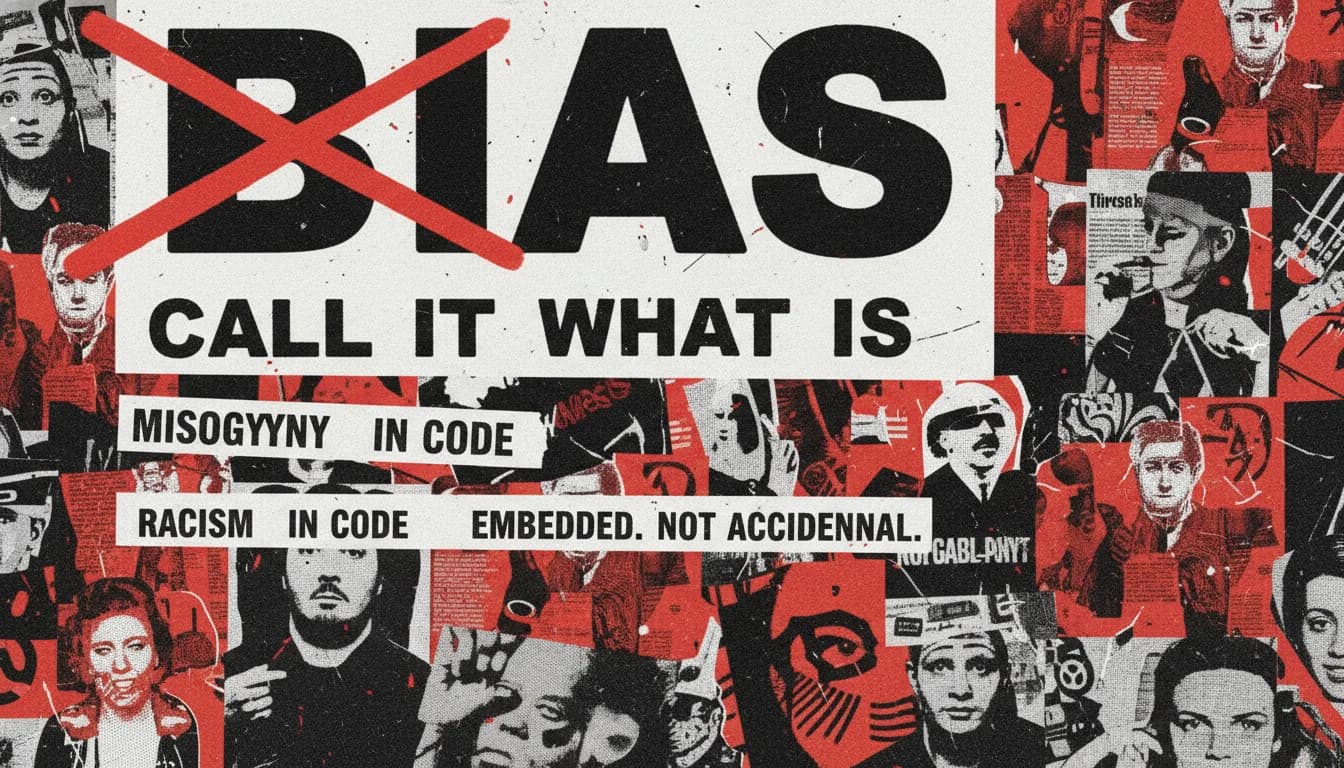

Paper · Slide 06b · Stop saying bias · 2018

Gender Shades

Joy Buolamwini & Timnit Gebru

The numbers behind Slide 06b. Up to 34.7% error on darker-skinned women. At most 0.8% on lighter-skinned men. A gap of more than 40×. The paper that made me stop saying 'bias' and start naming what I was seeing.

Extended notes

Buolamwini's method is the move: disaggregate the metric, name the harm, refuse the laundered word. This is what 'name what you see' is asking you to practise on your own toolchain.
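To make "disaggregate the metric" concrete, here is a minimal sketch of the arithmetic. It is not the paper's code or data: the `records` are made-up toy values, and the group labels and function names are hypothetical, chosen only for illustration. It assumes you already have a label, a prediction, and a subgroup for each example.

```python
from collections import defaultdict


def aggregate_error_rate(records):
    """Single headline number: fraction of all predictions that are wrong."""
    records = list(records)
    wrong = sum(1 for _, y_true, y_pred in records if y_true != y_pred)
    return wrong / len(records)


def error_rates_by_group(records):
    """Disaggregated view: error rate computed separately for each subgroup.

    `records` is an iterable of (group, y_true, y_pred) tuples.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        if y_true != y_pred:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Toy, invented data (NOT from Gender Shades): 10 examples per subgroup.
records = (
    [("darker_female", "F", "F")] * 7      # correct
    + [("darker_female", "F", "M")] * 3    # misclassified
    + [("lighter_male", "M", "M")] * 10    # all correct
)

overall = aggregate_error_rate(records)
per_group = error_rates_by_group(records)

print(f"aggregate error: {overall:.0%}")
for group, rate in sorted(per_group.items()):
    print(f"  {group}: {rate:.0%}")
```

On this toy set the aggregate error is 15%, which sounds tolerable until the breakdown shows every one of those errors landing on a single subgroup. That is the thing the single number hides.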

Discussion prompts

- 01 Rewrite a vague critique you've made of an AI tool using only specific, named harms. What changes about who can act on it?

- 02 Pick a model you use daily. Sketch the smallest possible Gender Shades–style audit you could run on it this month.

- 03 Where does your team currently report aggregate accuracy? What would happen if you required a disaggregated breakdown alongside every claim?

Quick questions (from the library card)

- What does Buolamwini's auditing methodology unlock that aggregate accuracy hides?

- Which systems in your own toolchain have you never actually audited?

- What's the version of Gender Shades for the model you depend on most?